Now we will send JSON-formatted data and see how the schema is handled. If you send data to an Elasticsearch index that has no mapping yet, Elasticsearch determines each field's type from the first value it sees for that field. This is called dynamic mapping. It does not always pick the type you want, so the alternative is to define the schema (the mapping) manually. We will have a look at both.

The geonames database I use has about 17 million rows. That takes a while to ingest, so I created a separate file that contains only 10,000 records. I will use this file with Logstash for this example. Also, I am running this on Linux, so if you use Windows, the commands and paths may differ slightly.

Before we continue, make sure you have installed both Elasticsearch and Kibana. Kibana is the frontend for creating visualizations and dashboards. Download the relevant packages for your operating system from the Elastic website, install them and then start both.

I named my Logstash file: geonames_1.yml and it is located in the Logstash config folder. The complete code is listed at the bottom of part 1 of this post. Adjust the path as appropriate for your system. I also changed the file to use the index "geonames_01" in Elasticsearch. To run it with Logstash do this:

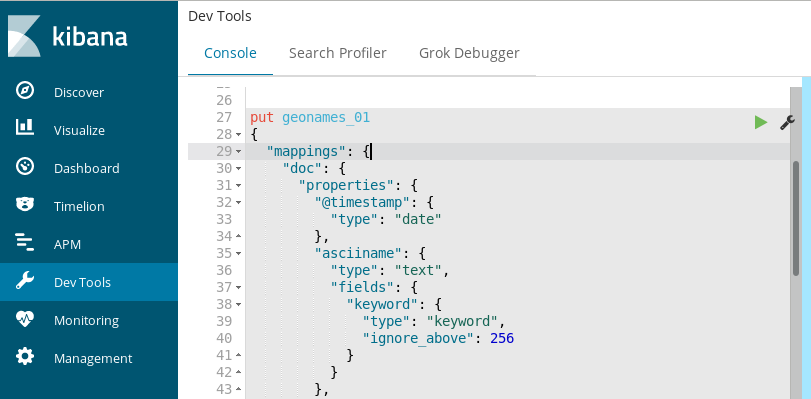

In Logstash we converted the latitude and longitude of the position to float values, and that is how they appear in the mapping shown above. To make use of the geo-indexing features of Elasticsearch, the position field needs to be defined as type "geo_point" in Elasticsearch.

Once the mapping for a field has been defined in an index, its type cannot be changed. So I will go ahead and delete the "geonames_01" index, and we will manually add a different schema which properly maps the position field.

The popup where you saw the definition of the mapping for our index has a button labeled "Manage". Click on it and then select "Delete Index" to delete it.

Click on the "Dev Tools" link on the left side of the browser window. By default you will be on the "Console" tab. In the left part of the console paste the code from below:
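As a minimal sketch of such a request, the following creates the "geonames_01" index with the position field mapped as "geo_point". It assumes Elasticsearch 7 or later, where mappings no longer contain a type name, and it assumes the field is literally called `position`. Older versions need an extra type level under `mappings`, and any other fields you want to map explicitly would go next to `position`. Fields that are not listed are still mapped dynamically.

```console
PUT geonames_01
{
  "mappings": {
    "properties": {
      "position": {
        "type": "geo_point"
      }
    }
  }
}
```

For the documents to be accepted, the value Logstash sends in `position` has to be in one of the formats a "geo_point" field understands, for example an object with `lat` and `lon` keys.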

Still in the "Console" tab, click on the green icon to execute the code.

So let's go back to Logstash and run the config file once again.
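Once Logstash has finished, one way to check the result is to ask Elasticsearch for the mapping of the index from the same "Console" tab. This is only a suggested check, assuming the index is still named "geonames_01":

```console
GET geonames_01/_mapping
```

If the manual mapping was applied, the position field should be listed with the type "geo_point" instead of float values.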

That's all for the second part. The third part will concentrate on creating visualizations and a dashboard in Kibana on top of the data we imported.

Here is part 3:

Carpe Diem
