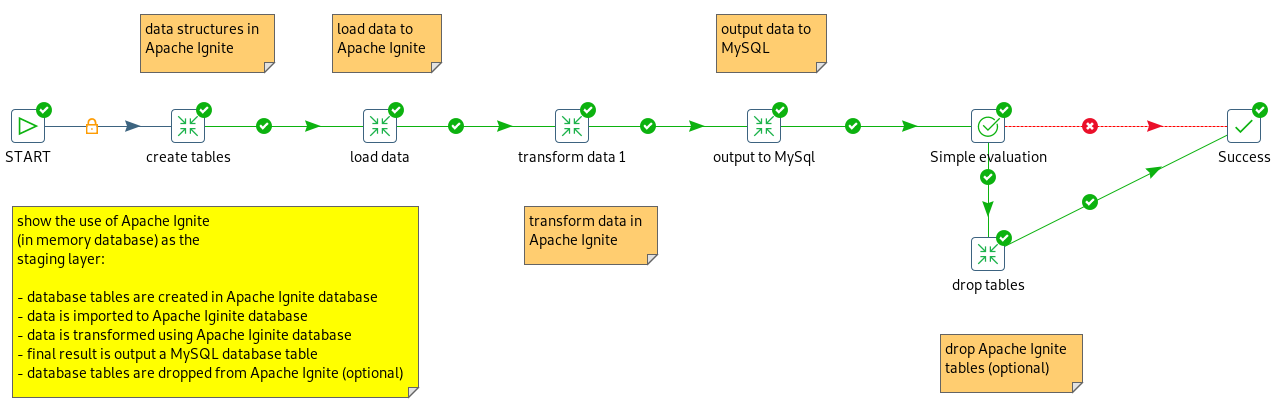

The first part discussed the general setup and why it can be interesting to use Apache Ignite as an in-memory database for an ETL process: it acts as an in-memory storage layer for your data transformations.

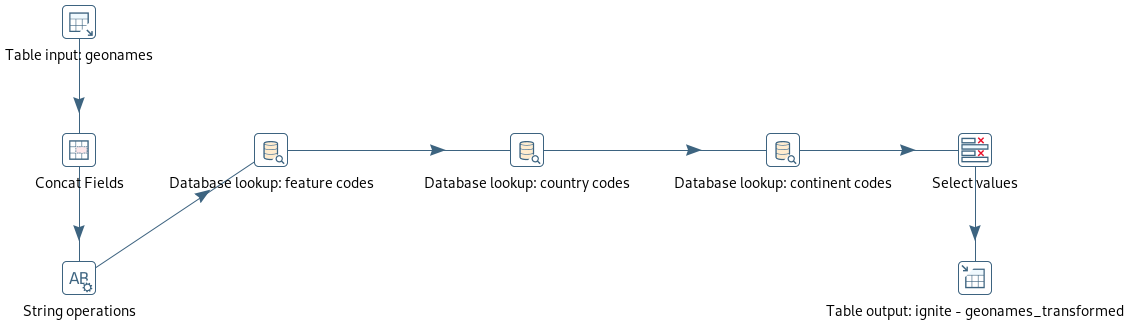

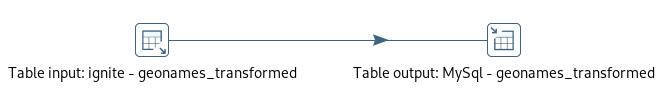

Here again is the screenshot of the completed ETL:

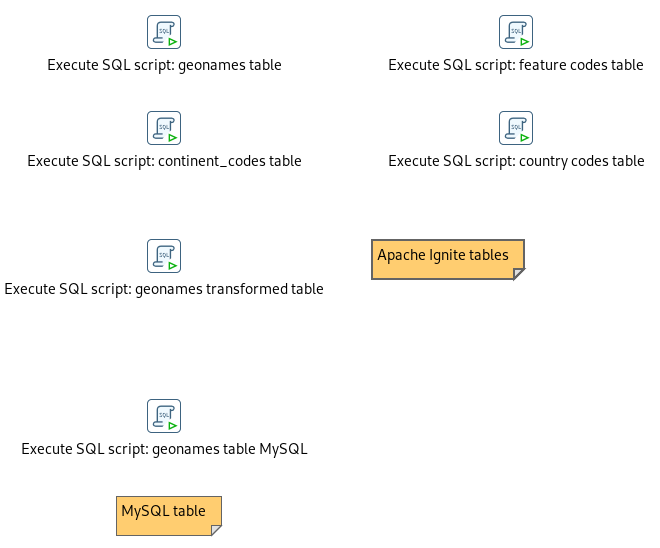

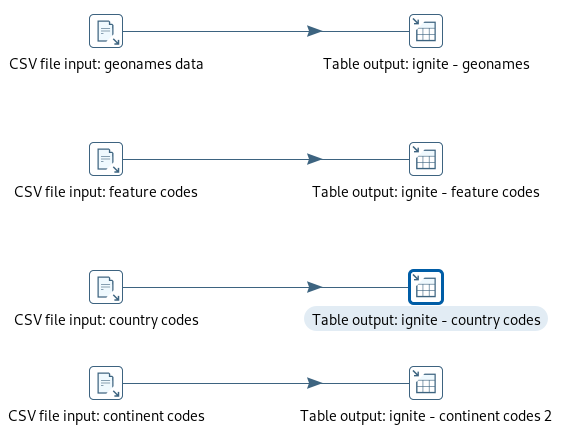

At the beginning, the tables and structures in Apache Ignite are created using "Execute SQL script" steps. This is what the complete transformation looks like:

Here is an example - just a normal create table statement.

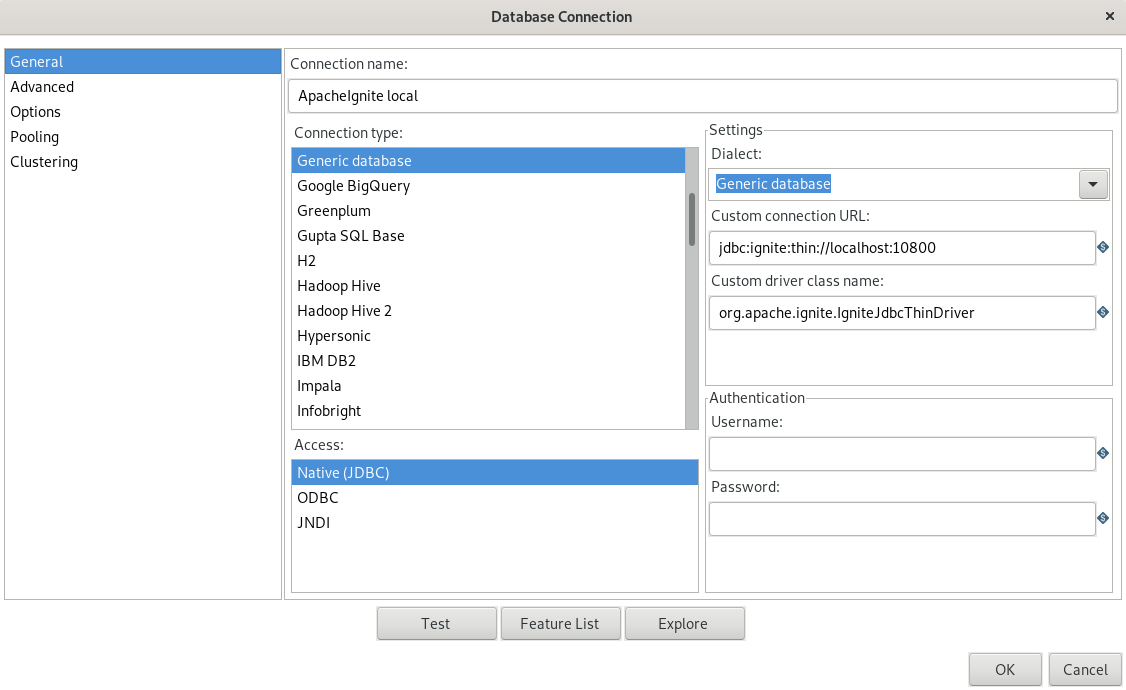

This might not be so interesting, but I wanted to find out whether a whole processing chain works without issues and whether PDI and Ignite work well together. And they do! It is rather easy to replace an existing connection to a traditional database with one to Apache Ignite. As Ignite supports JDBC and SQL, redesigning the transformation job takes little effort. All steps I have tested work out of the box with Ignite: creating DDL statements, querying, deleting, etc.

Issues:

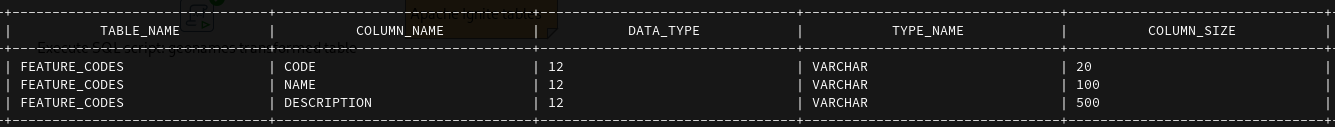

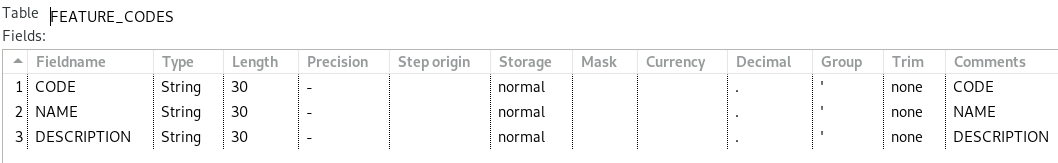

I did find one minor issue, though: when PDI reads data from Ignite, all columns of type "String" have a length of "30", regardless of whether they are defined shorter or longer in the database schema. Here is the create table statement of one of the tables:

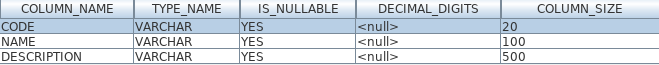
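To make the mismatch concrete, here is a minimal Python sketch that compares the lengths declared in the schema with the lengths a tool reports. The column names and lengths are hypothetical examples, not the real table from the screenshot, and the function `find_length_mismatches` is only an illustration, not part of PDI or Ignite.

```python
from typing import Dict, List, Tuple


def find_length_mismatches(
    declared: Dict[str, int], reported: Dict[str, int]
) -> List[Tuple[str, int, int]]:
    """Return (column, declared_length, reported_length) for every column
    whose reported length differs from the declared one."""
    mismatches = []
    for column, declared_length in declared.items():
        reported_length = reported.get(column)
        if reported_length is not None and reported_length != declared_length:
            mismatches.append((column, declared_length, reported_length))
    return mismatches


# Hypothetical values: lengths as declared in the CREATE TABLE statement ...
declared = {"CUSTOMER_NAME": 100, "COUNTRY_CODE": 2, "CITY": 30}
# ... and as reported when reading the metadata (everything comes back as 30).
reported = {"CUSTOMER_NAME": 30, "COUNTRY_CODE": 30, "CITY": 30}

mismatches = find_length_mismatches(declared, reported)
for column, want, got in mismatches:
    print(f"{column}: declared {want}, reported {got}")
```

With these example values, only the columns whose declared length is not 30 show up as mismatches.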

I have cross-checked this with a different SQL tool (Squirrel): I created a connection to Ignite using the same JDBC driver and retrieved the table definition details. This is what Squirrel shows:

Because the reported size/length of the columns is wrong, you have to correct it manually in a "Select Values" step so that, for example, when the data is written to a table, PDI generates a DDL statement with the correct lengths.
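The effect of that manual correction can be sketched in a few lines of Python. This is not how PDI works internally; it only illustrates the idea of overriding the reported string lengths before a DDL statement is generated. The table name, column names, lengths and both helper functions are assumptions made up for the example.

```python
from typing import Dict, List, Optional, Tuple

# (column name, SQL type, length or None if the type has no length)
Column = Tuple[str, str, Optional[int]]


def apply_length_overrides(
    columns: List[Column], overrides: Dict[str, int]
) -> List[Column]:
    """Replace the length of VARCHAR columns listed in `overrides`."""
    corrected = []
    for name, sql_type, length in columns:
        if sql_type == "VARCHAR" and name in overrides:
            length = overrides[name]
        corrected.append((name, sql_type, length))
    return corrected


def build_create_table(table: str, columns: List[Column]) -> str:
    """Build a plain CREATE TABLE statement from the column metadata."""
    parts = []
    for name, sql_type, length in columns:
        type_sql = f"{sql_type}({length})" if length is not None else sql_type
        parts.append(f"{name} {type_sql}")
    return f"CREATE TABLE {table} ({', '.join(parts)})"


# Hypothetical metadata as it arrives from Ignite: every string has length 30.
columns_from_ignite = [
    ("ID", "INTEGER", None),
    ("CUSTOMER_NAME", "VARCHAR", 30),
    ("COUNTRY_CODE", "VARCHAR", 30),
]
# The lengths that were actually declared in the schema (example values).
overrides = {"CUSTOMER_NAME": 100, "COUNTRY_CODE": 2}

ddl = build_create_table(
    "CUSTOMER_OUT", apply_length_overrides(columns_from_ignite, overrides)
)
print(ddl)
# CREATE TABLE CUSTOMER_OUT (ID INTEGER, CUSTOMER_NAME VARCHAR(100), COUNTRY_CODE VARCHAR(2))
```

Without the overrides, both string columns would end up as `VARCHAR(30)` in the generated statement, which is the problem described above.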

I hope this helps you get an overview or get started. It's worth a try, especially if you have a good use case for processing data in memory.

Carpe Diem
