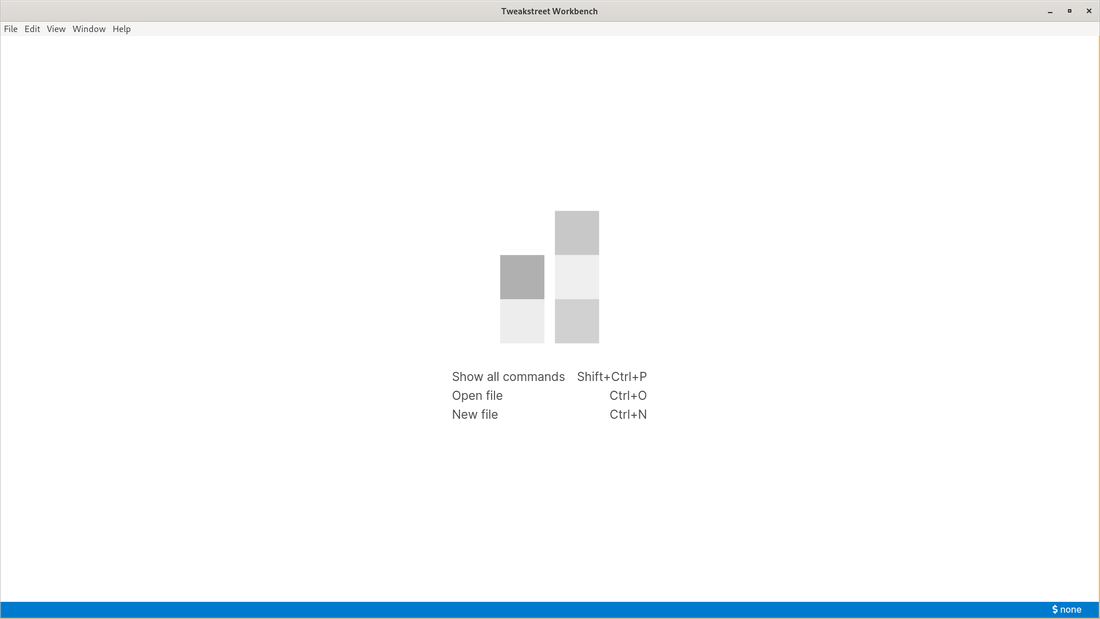

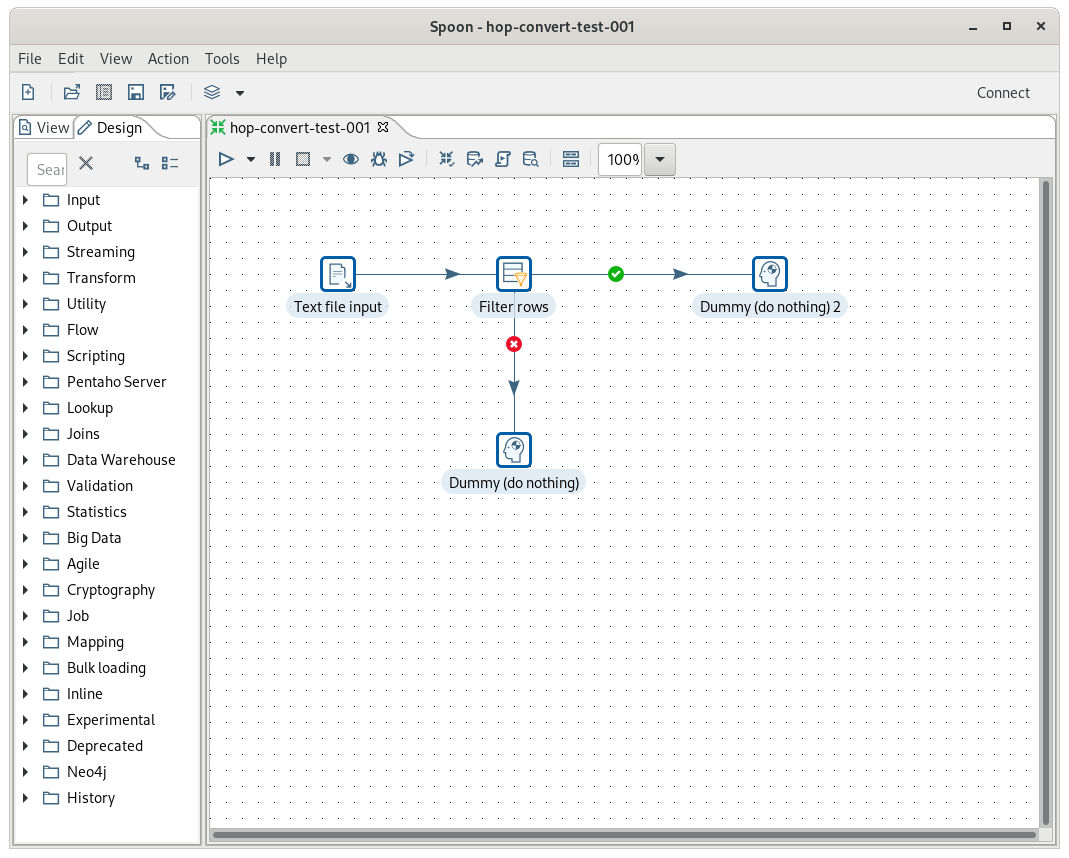

Once the application is running, you see this:

Note that most actions also have an assigned keyboard shortcut. The application consistently tries to make life easier for users who prefer working with the keyboard.

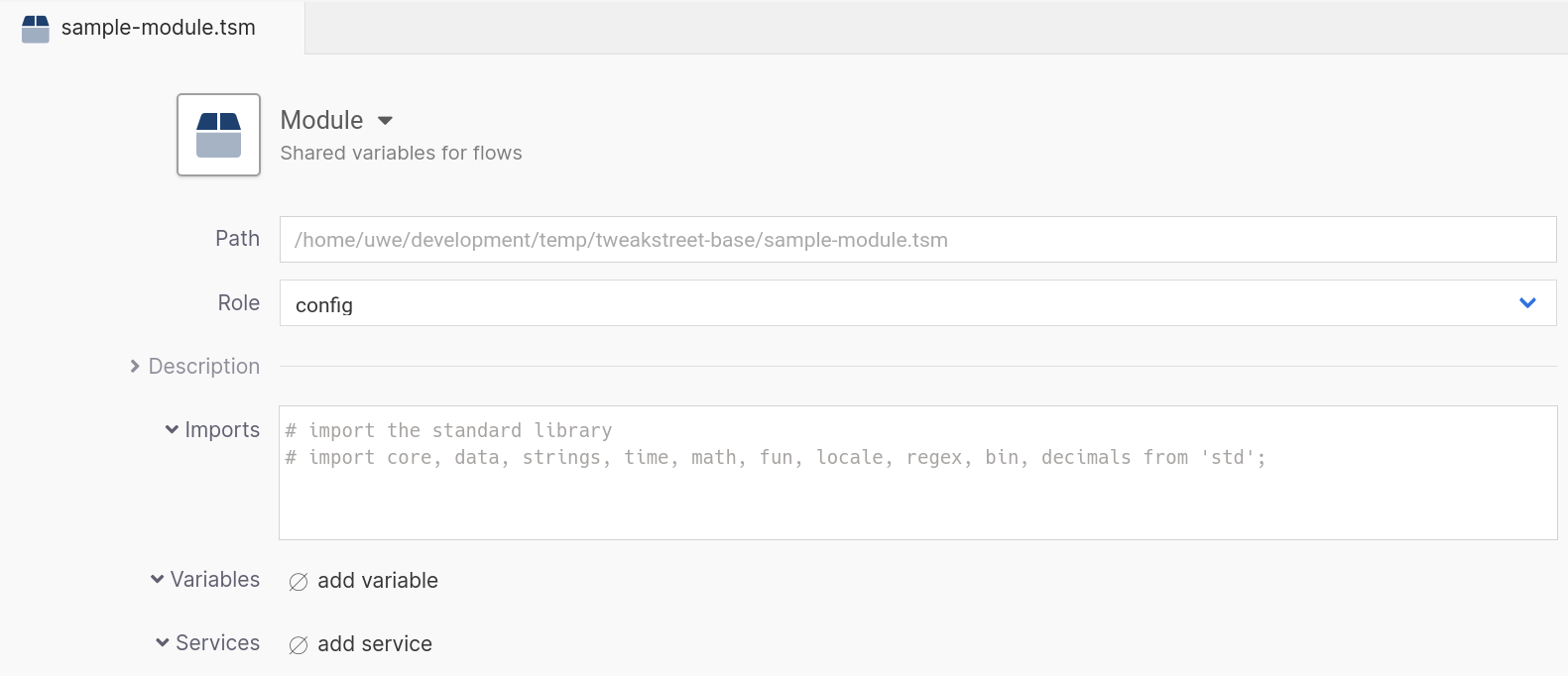

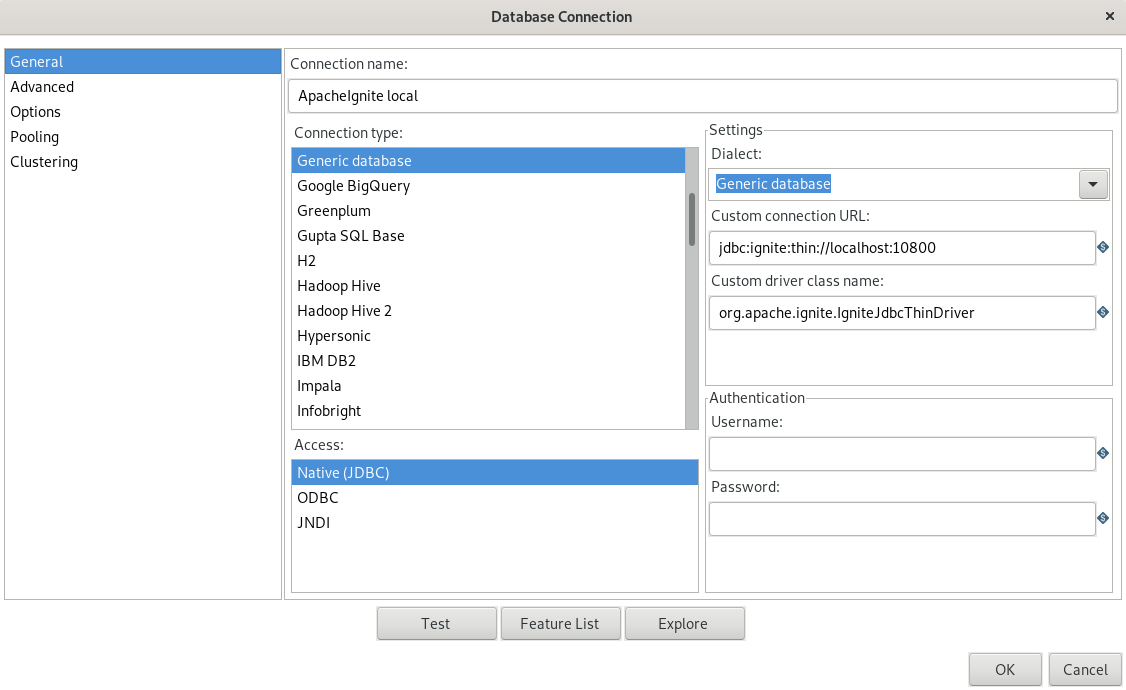

Let's create a config module. It will be used to define variables and services (e.g. database connections) that we do not want to hardcode in the data flow. To do so, select "File" >> "New..." from the menu, press Ctrl+N, or right-click the canvas and select "New...". Select "Module". A dialog appears where you can enter the details. For the moment we just select "config" in the line labeled "Role". Then select "File" >> "Save" or press Ctrl+S. The dialog for specifying the module's filename appears. Note that it opens in the defined workspace folder, so we don't have to navigate through the complete filesystem. Give the file an appropriate name such as "sample-module.tsm".
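To illustrate why it helps to keep variables and services out of the flow itself, here is a minimal Python sketch of the same idea. It is not how the application stores modules internally; the names `Config`, `load_config`, `describe_flow`, `DB_URL` and `BATCH_SIZE` are all hypothetical and exist only for this example.

```python
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class Config:
    """Hypothetical stand-in for a config module: values the flow needs but should not hardcode."""
    db_url: str
    batch_size: int


def load_config(env: Mapping[str, str]) -> Config:
    """Build the config from an environment-like mapping, with illustrative defaults."""
    return Config(
        db_url=env.get("DB_URL", "sqlite:///:memory:"),
        batch_size=int(env.get("BATCH_SIZE", "100")),
    )


def describe_flow(config: Config) -> str:
    """The 'flow' only refers to the config; it contains no connection details of its own."""
    return f"read from {config.db_url} in batches of {config.batch_size}"


if __name__ == "__main__":
    print(describe_flow(load_config({})))
```

Because the flow only receives a `Config`, the same flow can run against a different database by changing the config, not the flow.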

Here is the screenshot after saving the module:

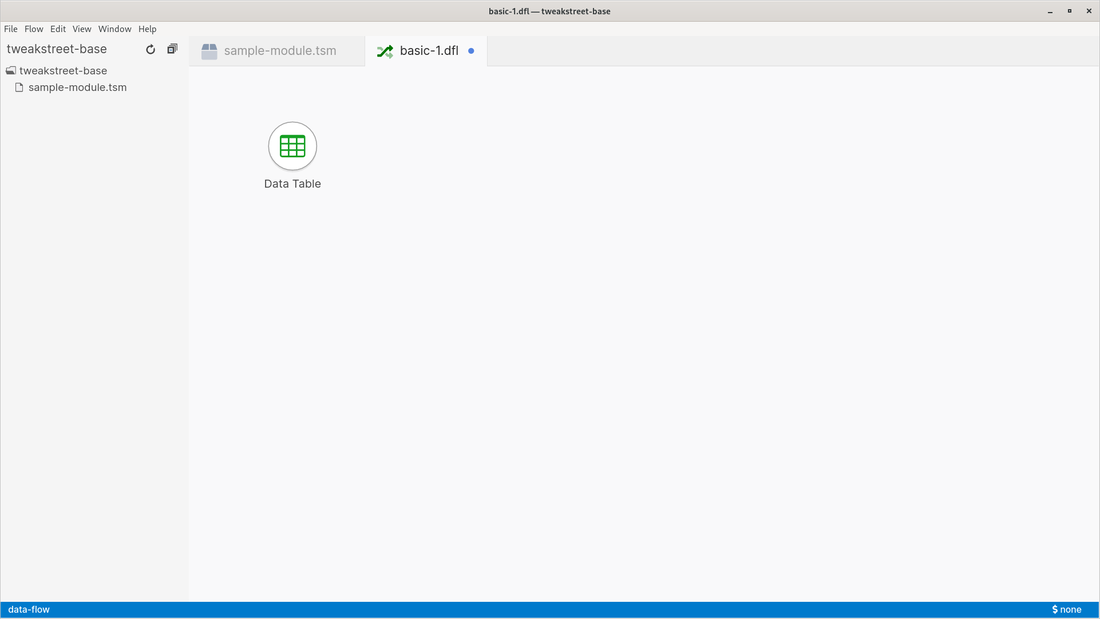

Next we add a data flow. Press Ctrl+N, select "Data Flow", and press Enter. Let's quickly save it by pressing Ctrl+S. Give it a name of your choice. Data flows have the standard extension ".dfl".

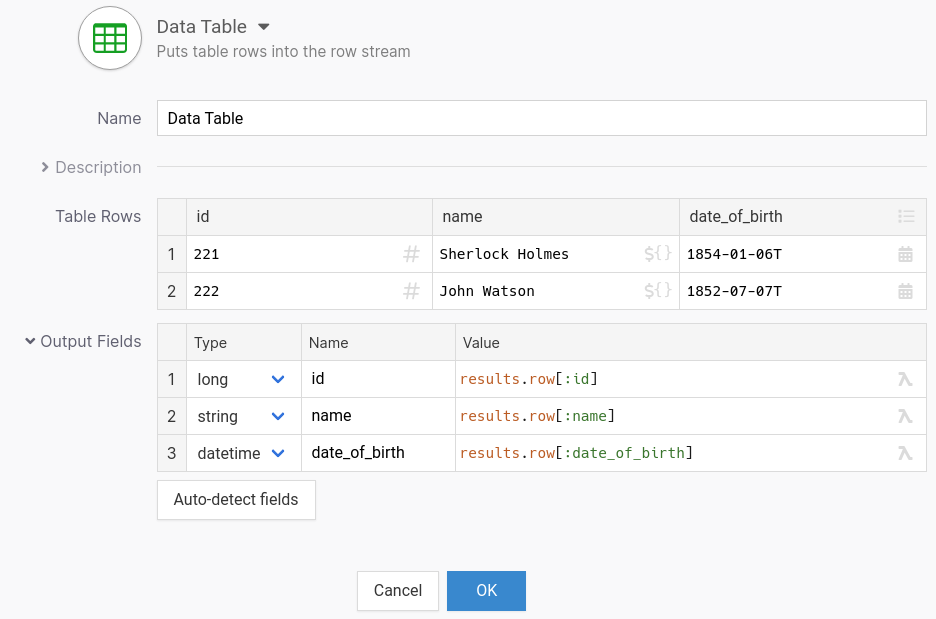

Press Ctrl+I to insert a step into the flow. In the dialog that pops up, type "d-a" and you will see that the list gets filtered. "Data Table" is displayed at the top. Press Enter or click it to add it to the canvas.

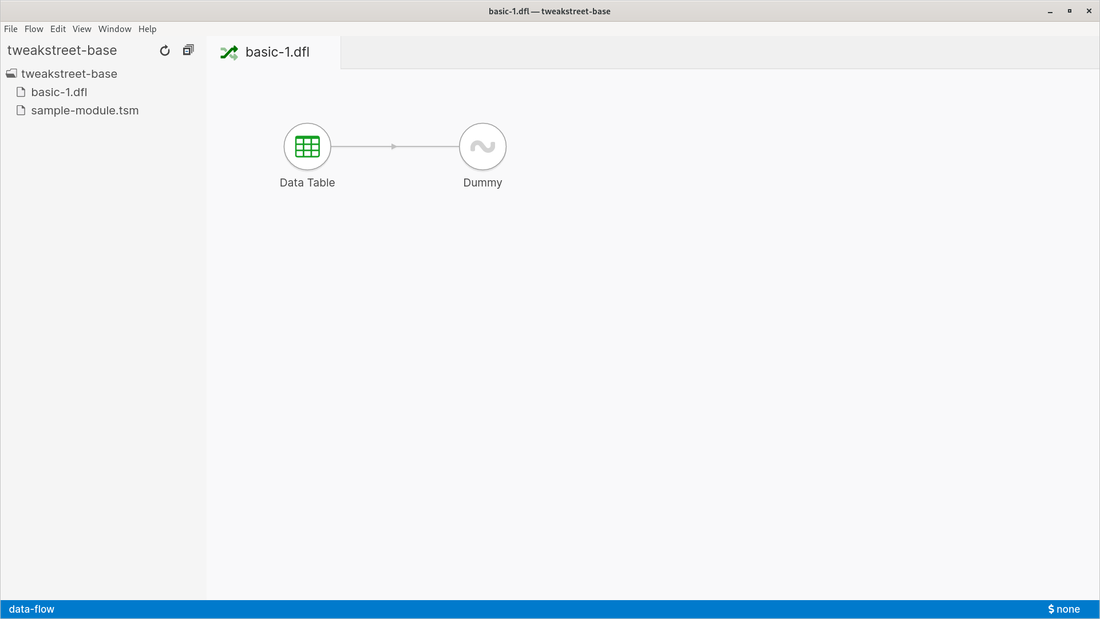

You can select steps by clicking on them or by drawing a rectangle around them. Once selected, you can move, cut, copy, or paste the selection. Standard undo/redo functionality is available.

Press Ctrl+I again to add another step to the canvas. Type "d-u" and the Dummy step will appear at the top. Select it to add it to the canvas. The Dummy step has no functionality of its own; it does not change the incoming data in any way. Now we need to connect the two steps. Either right-click the Data Table step and select "Connect", then move the mouse to the other step and click it, or hold the Shift key, click the Data Table step, move the mouse over the other step, and release the mouse button.
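Conceptually, the two connected steps behave like a source feeding a pass-through. The following Python sketch models that idea only; it is not the application's API, and the function names `data_table`, `dummy` and `connect`, as well as the sample rows, are made up for illustration.

```python
from typing import Callable, Dict, Iterable, Iterator

Row = Dict[str, object]


def data_table() -> Iterator[Row]:
    """Hypothetical source step: emits a fixed set of rows."""
    yield {"id": 1, "name": "alpha"}
    yield {"id": 2, "name": "beta"}


def dummy(rows: Iterable[Row]) -> Iterator[Row]:
    """Pass-through step: forwards every incoming row unchanged."""
    for row in rows:
        yield row


def connect(source: Iterable[Row], step: Callable[[Iterable[Row]], Iterator[Row]]) -> Iterator[Row]:
    """Model a connection: the output of the source becomes the input of the step."""
    return step(source)


if __name__ == "__main__":
    for row in connect(data_table(), dummy):
        print(row)
```

A pass-through step like this is handy as a placeholder or as a convenient end point while a flow is still being built.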

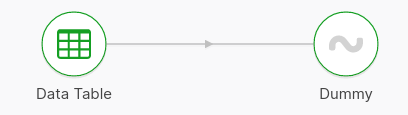

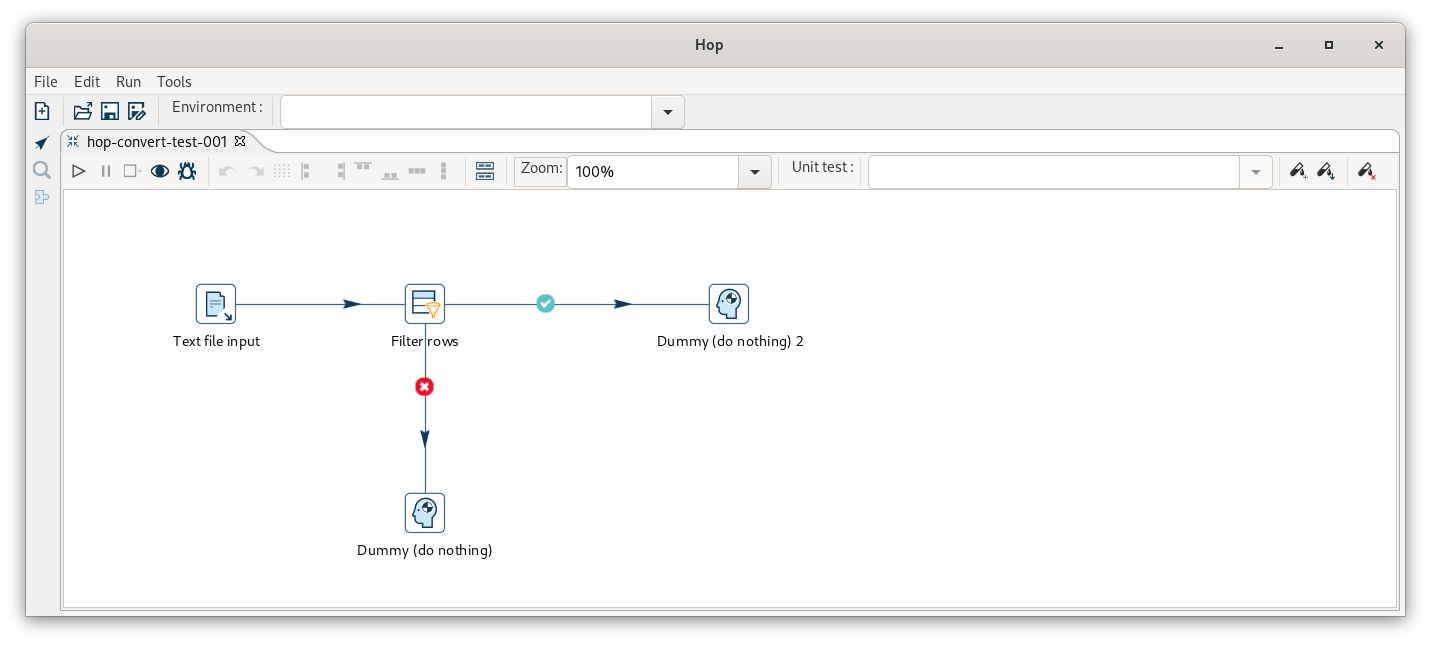

Here is what our data flow looks like:

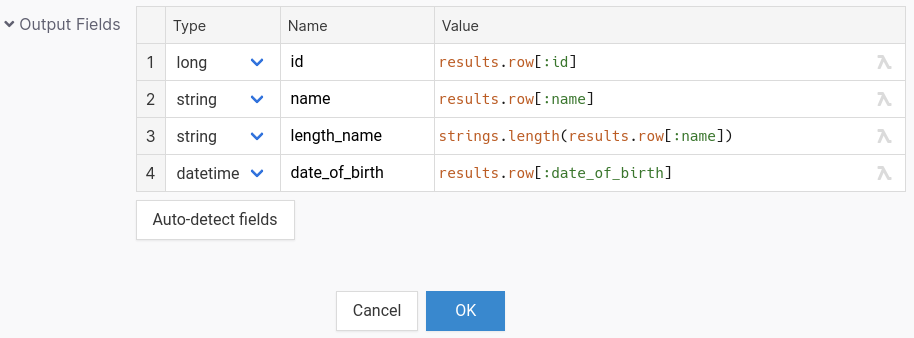

In the next article we will look at the Data Table step in more detail. We will also look at some other steps, run the flow, and examine the results.

Carpe Diem
